Follow @BikerJaredThe phrase “big data” is used so often it’s almost trite. These days, nearly all large enterprises have established a data science or data integration practice that is used for analysis projects. In my experience, however, many smaller companies (or often smaller teams within large enterprises) have yet to adopt any sort of big data practice. Consequently, this makes it difficult to do many of the excellent analyses we post on this blog. The goal of this post is to help those on smaller teams who want to start analyzing big data and need a little help getting an environment up to suit their needs.

You’d be surprised at how many people I’ve spoken with who would like to get started with Big Data, but haven’t actually started collecting any data. Obviously, getting some data is the first step and the more data you have, the more flexibility you have to analyze it the future. The great thing is that data storage is cheap – storing 1 TB of data in S3 with full redundancy and easy access at $23 dollars / month is a price that nearly any team can afford.

If you’re a customer of the Adobe Experience Cloud, a great place to start getting data is Analytics data feeds. Beyond that, you’ll eventually want to pull any available data feeds from all of the Adobe Experience Cloud products. The easiest way to do this is by setting these up to deliver to AWS S3.

Regarding AWS S3…

If you’re unfamiliar with AWS or Amazon S3, it is one of Amazon’s oldest services and is a great place to store your data. Think of it as lots of hard drives connected to a network that looks like a single giant hard drive.

You can access this giant hard drive through any internet connection, and you can browse and access the data through the AWS S3 website, the command line interface, or a plethora of client applications that are out there.

Here’s an example of why this idea is so cool. I own a camera and have taken a lot of pictures throughout most of my adult life. The volume of pictures I took increased dramatically when I got a camera phone. At that point, I realized I had a few problems:

- It was hard to find a hard drive big enough to store everything.

- One of my hard drives failed, and I lost some photos and video – CRAP! How do I avoid that in the future?

- If my house burned down or flooded, I’d potentially lose all of my photos!

- Moving large amounts of pictures or large videos between drives took a long time.

- Knowing which pictures were where and on which drive became an enormous pain.

It turns out, folks that work with Big Data have the same problems. S3 is fantastic because it solves all of these problems:

- S3 virtually “glues” hard drives together to create what appears to be a single, enormous hard drive.

- Amazon stores multiple copies of your data across their collection of hard drives so that a failure doesn’t result in lost data.

- Since your data is off-site, you could experience a catastrophic event (earthquake, flood, etc.) without losing data

- Amazon has found a way to “stripe” data across the collection of hard drives to make access extremely fast

- Since all of your data is all in one place, it’s easier to search and find things

The cost for S3 is far less than it costs me to do on my own.

Regarding failures, they have this to say about the durability of data stored in S3 (from their FAQ):

“If you store 10,000 objects with Amazon S3, you can on average expect to incur a loss of a single object once every 10,000,000 years.”

What about data access and security?

But wait, isn’t my data accessible to the world if it’s in the cloud? Not exactly. There are some tools that you can use to protect yourself:

- You can set permissions of files and folders in S3 to only allow you or other specific users to access the data which is usually good enough. This is the default setting.

- If you’re paranoid, you can encrypt the data by providing a public key to Amazon. If you encrypt the data, it’s up to you to decrypt it using your private key. This type of encryption means that Amazon can’t even see what you have stored there.

What about cost?

It costs me about $20 / month to store 1 TB of data in S3. It costs me nothing to upload my data and hardly costs me anything to download it. To build a network attached storage (NAS) with redundant storage and scale it like Amazon would cost me thousands of dollars (not to mention the cost of maintenance and data redundancy).

How do I create a place for data in S3?

So how do you get an S3 bucket? Since data from the Experience Cloud can contain sensitive information (cost of goods, order totals by day, other sensitive financial information), you’re probably going to want to use an AWS account controlled by your organization (in contrast to your own, personal AWS account). For simplicity sake, I’m going to assume that you’ve already got an AWS account, but if you don’t it’s easy to create a free one here.

Log in and go to S3

Go to https://aws.amazon.com/console and click the ‘Sign into the Console’ button and log in.

After you log in, click the search bar and type S3.

Choose a Bucket

Create or select an existing bucket. To create a bucket, click the blue ‘Create bucket’ button.

After clicking the create button, you’ll be presented with a screen asking for details.

First, you’ll need a bucket name. Bucket names are unique across all of Amazon S3 accounts. Think of it as a domain name for your storage account. Once you’ve chosen it, nobody else can use it unless you give it up (which, for security reasons, you should never do).

The ‘Region’ indicates where in the world your data will be physically stored. If you have a data center close to one of the regions listed in the select box, you’d probably want to chose it. Otherwise, just leave it alone.

If you want to clone settings from another bucket, you can do so by using the last option on this dialog. If this is your first bucket, leave it alone.

If you know what you’re doing and want to customize more settings, click the ‘Next’ button at the bottom right. Otherwise, just click the ‘Create’ button at the bottom left. The default settings are actually pretty good for most people.

Once created, click the link that now shows up in the list. You’ll see an empty bucket. From here you can upload data, create folders, or manage new files that you place here.

Since the default settings lock down your bucket pretty well, you’ll need to create some credentials if you want Adobe to deliver data there.

Create credentials

You’re going to need to provide an ‘Access Key’ and ‘Access Secret’ to Adobe if you want them to be able to drop files on your newly created S3 bucket. To create an account, we need to go to the IAM tool. IAM stands for Identity and Access Management. Its where you create users and manage their credentials.

To get there, click the ‘Services’ tab at the top of the window.

A white pane will appear with a search bar at the top. Type in ‘IAM’ and select the IAM option that appears.

A screen will load with some options for tuning security. From here, you want to click the ‘Users’ link.

Now, click the blue ‘Add User’ button at the top of the screen.

Here, choose a username and check the ‘Programmatic access’ checkbox. Then click the ‘Next: Permissions’ button. Here, we need to give the user access to your S3 bucket. To simplify, we’re going to give this user full access to all buckets. This is obviously not very secure, but for the sake of simplicity, its what we’re going to do here.

Here, we need to give the user access to your S3 bucket. To simplify, we’re going to give this user full access to all buckets. This is obviously not very secure, but for the sake of simplicity, its what we’re going to do here.

Note:

I highly recommend doing some research on this topic, or having the folks that manage AWS for your organization create a Group or Policy (or user) that can be used only for this bucket.

Click the ‘Attach existing policies directly’ box. Then type ‘S3’ into the search box. Finally, select ‘AmazonS3FullAccess’.

After doing this, click the ‘Next: Review’ button at the bottom right corner of the page. I had to scroll down a bit in my window to see this.

After reviewing things, click the blue ‘Create’ button at the bottom right corner. I’m not including a screenshot for this part because its pretty simple. The next screen you see is very important.

This screen shows you your newly created user. There are two bits of information that you need here. Copy and paste the Access Key ID and put it somewhere safe. Next, click the blue ‘Show’ link on the page to expose the ‘Secret Access Key’. Once exposed, copy and paste the value to the same safe place as the Access Key ID. (You can also click the ‘Download .csv’ button to download the credentials in a text file).

This screen shows you your newly created user. There are two bits of information that you need here. Copy and paste the Access Key ID and put it somewhere safe. Next, click the blue ‘Show’ link on the page to expose the ‘Secret Access Key’. Once exposed, copy and paste the value to the same safe place as the Access Key ID. (You can also click the ‘Download .csv’ button to download the credentials in a text file).

Once finished, click the ‘Close’ button at the bottom right. A few things to note about this step:

- Once you click ‘Close’, you’ll never be able to access the ‘Secret Access Key’ in AWS again. This is a security measure. If you ever lose your credentials, you’ll have to regenerate them or create a new user.

- The steps we just completed will give anyone with the ‘Access Key ID’ and ‘Secret Access Key’ access to any data that you have stored in S3. You really need to protect these credentials.

- I really, highly recommend… spending some time to learn about security policies in AWS in order to lock this down more. I’ve chosen to walk you through creation of really open credentials in order to simplify this. This blog post is about getting data into S3, not AWS security best practices. One great source — A Cloud Guru’s AWS videos. They’re fairly cheap to access, and are well organized. They are built around the AWS certifications and can really add to your capability and resume.

Create the data feeds

Wow — that took a while. Now for the fun part.

At this point, you’re going to want to head over here and setup an Adobe Analytics Clickstream Data Feed to deliver to your newly created bucket but with the following tweaks:

- Check the ‘Remove Escaped Characters’ checkbox (unless you want to deal with these on your own)

- Select ‘GZIP’ from the ‘Compression’ drop down

- Choose the ‘All Columns Standard (Oct 2016)’ or ‘All Columns Premium (Oct 2016)’ columns template based on your level of service

- The example later in this post assumes ‘All Standard’. If you are an Analytics Premium customer, you can still select ‘All Columns Standard’ and the example here will work. In the future as you dig more into your analysis and use more columns, you’ll want to upgrade to the ‘All Columns Premium’ setting and adjust the Athena table schema to match the additional columns.

- Make sure that ‘Multiple Files’ is selected. Otherwise, it will be very difficult to use your data in the next step.

When configuring where to send the data, you’re going to be asked for the following:

- Bucket Name

- Folder (if you want one)

- Access Key

- Access Secret

Use the values you created in the previous step to set this up. If everything has been set up right, you should start getting data feed data in your S3 bucket within 24 hours.

Great – I’ve got “Big Data”, but now what?

If you’ve followed me so far… Congratulations. You have a data lake. A large assortment of tools can now access your Analytics data. That wasn’t so bad, was it? Now, let’s see if we can get some value out of it.

My colleague, Matt Moss wrote a wonderful blog post about using SQL to query Analytics data feed data to calculate some basic metrics. Let’s build on that by using AWS Athena to query your Analytics data feeds using SQL. Before we get started, we’ll need to define a schema that matches how the data feed data is structured.

One quick thing…

The way we’ve configured our data feeds will cause multiple types of files to be delivered. This will confuse Athena and we need to clean these extra files out.

Go to the S3 tool, click the bucket name, (then folder name if you used a folder). Use the search tool to find your data feed files (usually have a .gz post fix and are larger than the other files). Select all of these, then use the ‘More’ -> ‘Cut’ option to cut and paste them to a different folder. Remember this folder name — we’ll need it to query the data.

Go to the Athena tool

We’re going to use that nifty ‘Services’ tab at the top again, but this time, type in ‘Athena’ into the search box and click the option that pops up.

Amazon has created a nifty tutorial that covers some basics on how to use Athena. I’ve gone through it and its actually not bad. I recommend you complete it first, and come back to this when you’re done. Things will make a little more sense.

Configure a new database and table

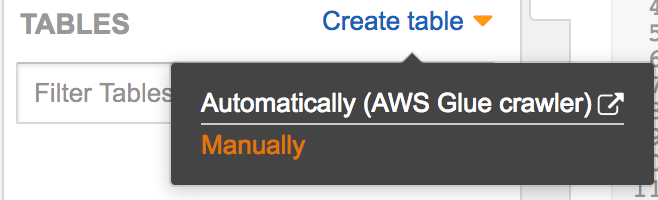

To create a new database and table, click the ‘Create table’ link in the left pane and select the ‘Manually’ option.

On the next screen, you can create a database by simply entering the database name, or you can select an existing database (perhaps one you created during the tutorial). Choose an appropriate table name, and enter the S3 location of your data. Make sure you’ve got a trailing slash here – Athena will complain if you don’t have one.

In the example below, I’ve loaded my data feed data in a bucket called ‘datafeedtoolbox’, and a folder called ‘athena_project’.

Once you’ve finished configuring these options, click the blue ‘Next’ button.

The next screen asks you to specify a data format. Analytics data feed data is by default in tab format.

Here you may be thinking “TSV? I thought I configured the data feed to deliver in GZIP format?”. Well, you’re not wrong. It turns out Athena can read (albeit more slowly) from GZIP’ed data.

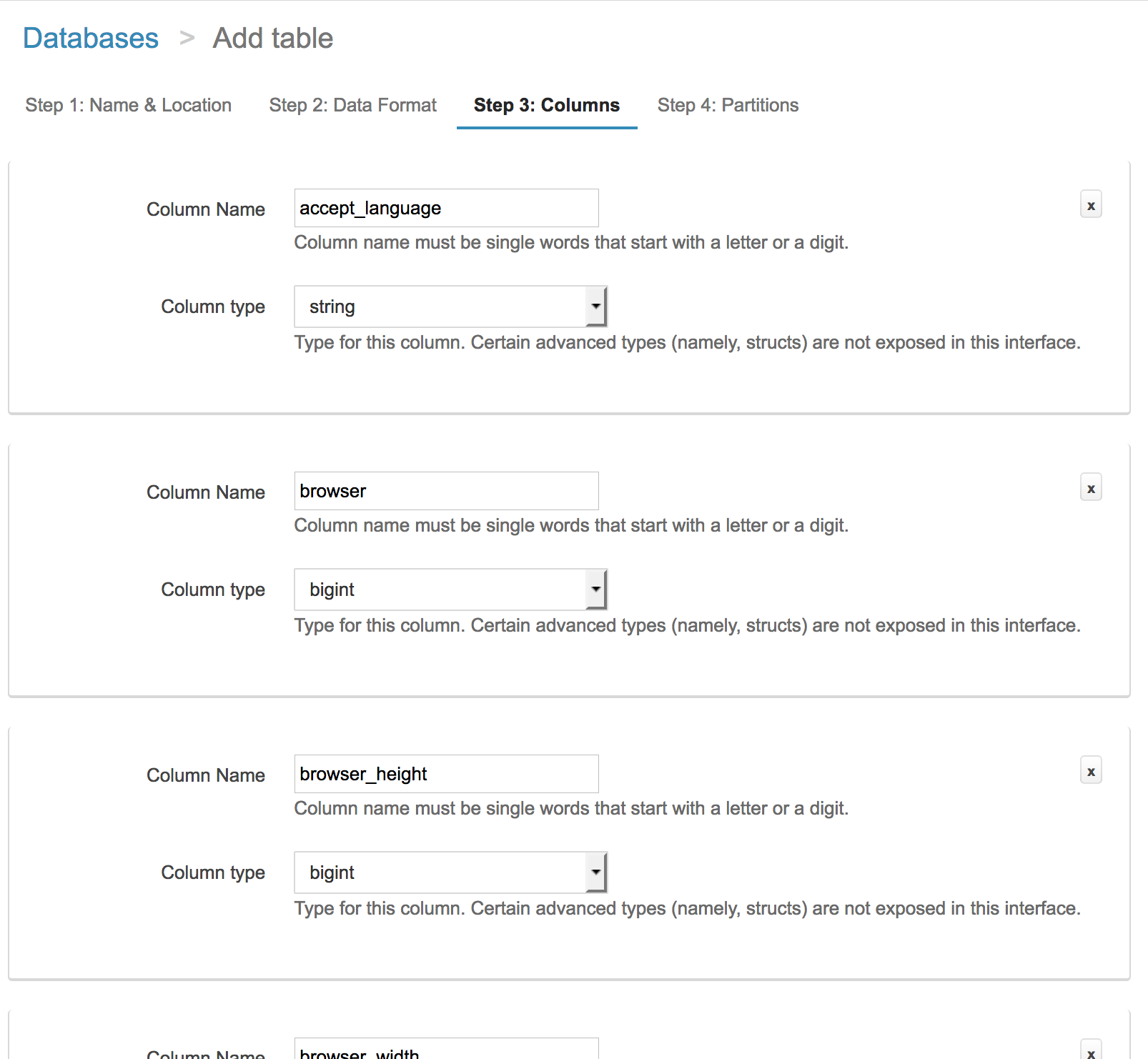

The next step is the fun part. We need to tell Athena what the data feed data looks like. We’re going to use the ‘Bulk add columns’ because its the easiest for this situation. There is a button near the bottom of the screen that will bring up the tool.

It expects the ‘schema’ to be a list of column names and data types. These types are native to Athena and also share characteristics with other relational databases. If you’ve used Oracle or Mysql/MariaDB, these will look familiar. The data types we’re going to use for our data feed are:

- TINYINT – 8 bit signed integer (-127 to 127)

- SMALLINT – 16 bit signed integer

- INT – 32 bit signed integer

- BIGINT – 64 bit signed integer

- STRING – I don’t know what the width of this data type is, but it seems to be rather large (64k?)

- DECIMAL – Useful for storing things like currency

- BOOLEAN – 1/0

- DATE – Useful for storing dates

My feed uses the Analytics All Columns Standard (Oct 2016) schema. I’ve taken a few hours and created a schema that you can paste into the ‘Bulk Add Columns’ tool. You’re welcome to tweak it to work with your schema.

Once you click ‘Add’, it will take about 1-2 minutes to parse this into the GUI. When complete, you’ll have a really long screen that looks kinda like this:

Scroll all the way down to the bottom of the window (it’ll take a while), and click the blue ‘Next’ button. The next screen will load and ask if you want to use partitions. This step is optional and for simplicity’s sake, let’s just click ‘Create table’.

Once the next screen loads, you’re ready to rock.

Query some data!

You can now use a test query to see if your data is accessible to Athena.

SELECT concat(post_visid_high,’-‘,post_visid_low) visid, visit_num, visit_page_num

FROM analytics_datafeeds

LIMIT 100

Here is some example output:

Conclusion

This is an important first step on your big data journey. Using the Athena service with S3 is only the beginning. Having your data available in S3 allows anything to access it.

- You can load your data into RedShift if you’d like…

- You can build a Hadoop cluster in AWS and have it read data from this very same S3 account

- You can setup a Spark or SparklyR cluster and use it to process your data in S3

- You can build a simple Java app using Amazon’s Java SDK and access your data however you want

- You can access it from a commandline

- Many other options…

I hope this gets you excited to get going. As always, please let me know if you have any questions or suggestions for a future topic.